Spaces:

Runtime error

Runtime error

CaglarAytekin

commited on

Commit

·

b9ba714

1

Parent(s):

1e7a8d6

first commit

Browse files- Causality_Example.png +0 -0

- DATA.py +198 -0

- DEMO.py +97 -0

- LEURN.py +695 -0

- LICENSE +201 -0

- Presentation_Product.pdf +0 -0

- Presentation_Technical.pdf +0 -0

- README.md +27 -13

- TRAINER.py +186 -0

- app.py +176 -0

- requirements.txt +7 -0

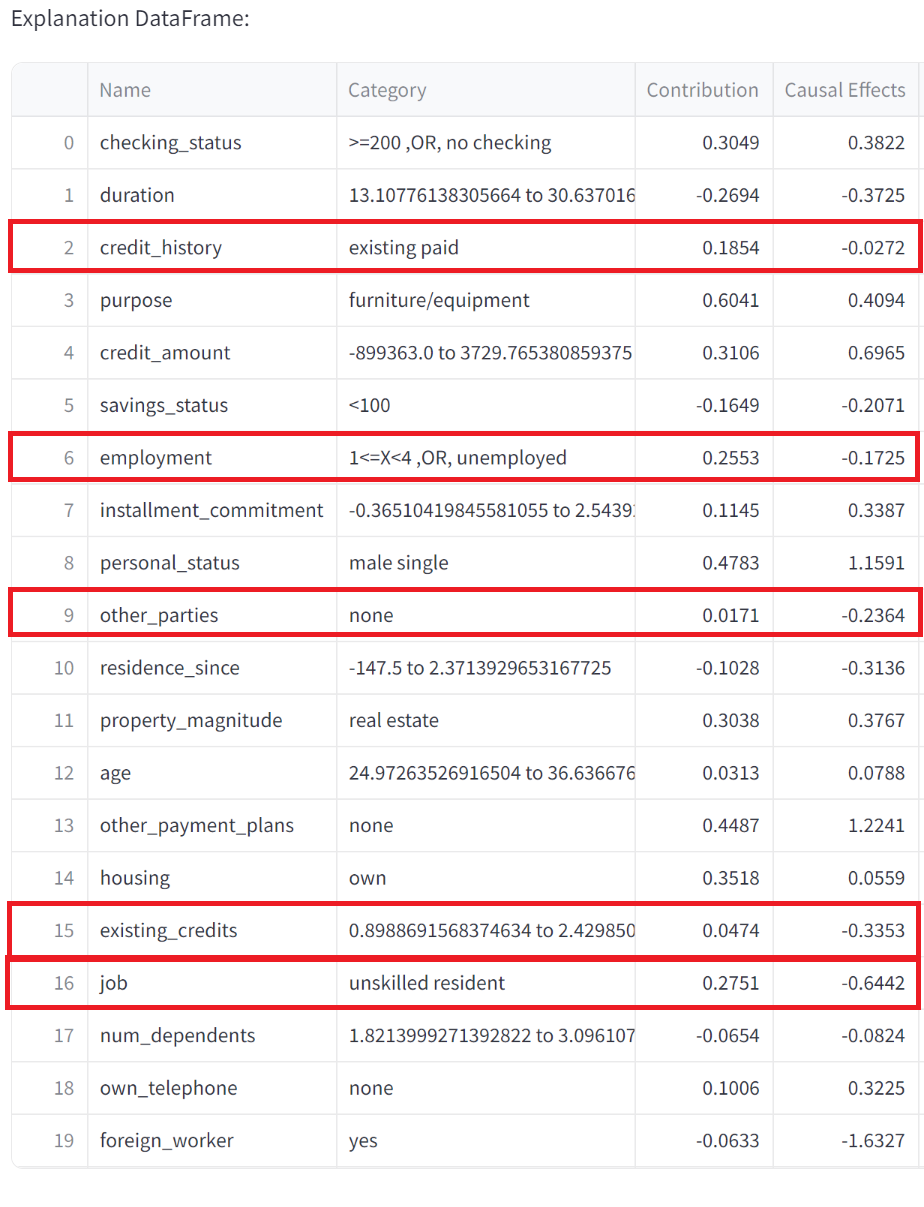

Causality_Example.png

ADDED

|

DATA.py

ADDED

|

@@ -0,0 +1,198 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

@author: Caglar Aytekin

|

| 3 |

+

contact: caglar@deepcause.ai

|

| 4 |

+

"""

|

| 5 |

+

|

| 6 |

+

import numpy as np

|

| 7 |

+

from sklearn.preprocessing import LabelEncoder, MinMaxScaler

|

| 8 |

+

import warnings

|

| 9 |

+

from sklearn.model_selection import train_test_split

|

| 10 |

+

import torch

|

| 11 |

+

import pandas as pd

|

| 12 |

+

pd.set_option('display.max_rows', None) # None means show all rows

|

| 13 |

+

pd.set_option('display.max_columns', None) # None means show all columns

|

| 14 |

+

pd.set_option('display.width', None) # Use appropriate width to display columns

|

| 15 |

+

pd.set_option('display.max_colwidth', None) # Show full content of each column

|

| 16 |

+

|

| 17 |

+

warnings.filterwarnings("ignore")

|

| 18 |

+

|

| 19 |

+

def split_and_processing(X,y,categoricals,output_type,attribute_names):

|

| 20 |

+

#If every entryin a column of a dataframe is None drop it

|

| 21 |

+

columns_to_keep_mask = ~X.isna().all()

|

| 22 |

+

X = X.dropna(axis=1, how='all')

|

| 23 |

+

# Update the categoricals list to reflect the columns not dropped

|

| 24 |

+

categoricals = [cat for cat, keep in zip(categoricals, columns_to_keep_mask) if keep]

|

| 25 |

+

attribute_names= [cat for cat, keep in zip(attribute_names, columns_to_keep_mask) if keep]

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

|

| 29 |

+

# Split into train and remaining

|

| 30 |

+

X_train, X_remaining, y_train, y_remaining = train_test_split(X, y, test_size=0.2, random_state=42)

|

| 31 |

+

|

| 32 |

+

# Split remaining into validation and test

|

| 33 |

+

X_val, X_test, y_val, y_test = train_test_split(X_remaining, y_remaining, test_size=0.5, random_state=42)

|

| 34 |

+

|

| 35 |

+

# Initialize preprocessor

|

| 36 |

+

preprocessor=DataProcessor(categoricals,output_type)

|

| 37 |

+

|

| 38 |

+

#Fit and transform for training set

|

| 39 |

+

X_train=torch.from_numpy(preprocessor.fit_transform_X(X_train).values).float()

|

| 40 |

+

y_train=torch.from_numpy(preprocessor.fit_transform_y(y_train)).float()

|

| 41 |

+

if output_type<2:

|

| 42 |

+

y_train=y_train.unsqueeze(dim=-1)

|

| 43 |

+

else:

|

| 44 |

+

y_train=y_train.long()

|

| 45 |

+

|

| 46 |

+

#Transform for validation and test set

|

| 47 |

+

X_val=torch.from_numpy(preprocessor.transform_X(X_val).values).float()

|

| 48 |

+

y_val=torch.from_numpy(preprocessor.transform_y(y_val)).float()

|

| 49 |

+

if output_type<2:

|

| 50 |

+

y_val=y_val.unsqueeze(dim=-1)

|

| 51 |

+

else:

|

| 52 |

+

y_val=y_val.long()

|

| 53 |

+

|

| 54 |

+

X_test=torch.from_numpy(preprocessor.transform_X(X_test).values).float()

|

| 55 |

+

y_test=torch.from_numpy(preprocessor.transform_y(y_test)).float()

|

| 56 |

+

if output_type<2:

|

| 57 |

+

y_test=y_test.unsqueeze(dim=-1)

|

| 58 |

+

else:

|

| 59 |

+

y_test=y_test.long()

|

| 60 |

+

|

| 61 |

+

preprocessor.attribute_names=attribute_names

|

| 62 |

+

preprocessor.output_type=output_type

|

| 63 |

+

|

| 64 |

+

#Determine class no

|

| 65 |

+

if output_type==0:

|

| 66 |

+

output_dim=y_train.shape[1]

|

| 67 |

+

elif output_type==1:

|

| 68 |

+

output_dim=1

|

| 69 |

+

else:

|

| 70 |

+

output_dim=len(np.unique(y_train))

|

| 71 |

+

|

| 72 |

+

preprocessor.output_dim=output_dim

|

| 73 |

+

return X_train,X_val,X_test,y_train,y_val,y_test,preprocessor

|

| 74 |

+

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

class DataProcessor:

|

| 78 |

+

def __init__(self, categoricals, output_type):

|

| 79 |

+

self.categoricals = categoricals

|

| 80 |

+

self.output_type = output_type

|

| 81 |

+

self.label_encoders = {}

|

| 82 |

+

self.scaler = MinMaxScaler(feature_range=(-1, 1))

|

| 83 |

+

self.target_scaler = MinMaxScaler(feature_range=(-1, 1))

|

| 84 |

+

self.most_common_categories = {}

|

| 85 |

+

self.target_encoder = None # For binary and multiclass

|

| 86 |

+

self.unique_targets = None # To store unique targets for binary classification

|

| 87 |

+

self.category_details=[]

|

| 88 |

+

self.suggested_embeddings=None

|

| 89 |

+

self.encoders_for_nn={}

|

| 90 |

+

|

| 91 |

+

def fit_transform_X(self, X):

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

# Convert all numerical columns to float precision

|

| 95 |

+

X.iloc[:, ~np.array(self.categoricals)] = X.iloc[:, ~np.array(self.categoricals)].astype(float)

|

| 96 |

+

X.iloc[:, np.array(self.categoricals)] = X.iloc[:, np.array(self.categoricals)].astype(str)

|

| 97 |

+

|

| 98 |

+

X_transformed = X.copy()

|

| 99 |

+

for i, is_categorical in enumerate(self.categoricals):

|

| 100 |

+

if is_categorical:

|

| 101 |

+

encoder = LabelEncoder()

|

| 102 |

+

X_transformed.iloc[:, i] = encoder.fit_transform(X.iloc[:, i])

|

| 103 |

+

self.label_encoders[i] = encoder

|

| 104 |

+

self.encoders_for_nn[X_transformed.columns[i]] = dict(zip(encoder.classes_, encoder.transform(encoder.classes_)))

|

| 105 |

+

self.most_common_categories[i] = X.iloc[:, i].mode()[0]

|

| 106 |

+

self.category_details.append((i, len(encoder.classes_)))

|

| 107 |

+

else:

|

| 108 |

+

# Fill missing values with the median for numerical columns

|

| 109 |

+

X_transformed.iloc[:, i] = X.iloc[:, i].fillna(X.iloc[:, i].median())

|

| 110 |

+

|

| 111 |

+

# Scale numerical features

|

| 112 |

+

numerical_features = X_transformed.iloc[:, ~np.array(self.categoricals)]

|

| 113 |

+

if numerical_features.shape[-1]>0:

|

| 114 |

+

self.scaler.fit(numerical_features)

|

| 115 |

+

X_transformed.iloc[:, ~np.array(self.categoricals)] = self.scaler.transform(numerical_features)

|

| 116 |

+

self.suggested_embeddings=[max(2, int(np.log2(x[1]))) for x in self.category_details]

|

| 117 |

+

|

| 118 |

+

return X_transformed.astype(float)

|

| 119 |

+

|

| 120 |

+

def transform_X(self, X):

|

| 121 |

+

X.iloc[:, np.array(self.categoricals)] = X.iloc[:, np.array(self.categoricals)].astype(str)

|

| 122 |

+

X_transformed = X.copy()

|

| 123 |

+

for i, is_categorical in enumerate(self.categoricals):

|

| 124 |

+

if is_categorical:

|

| 125 |

+

encoder = self.label_encoders[i]

|

| 126 |

+

# Transform categories, replace unseen with most common category

|

| 127 |

+

X_transformed.iloc[:, i] = X.iloc[:, i].map(lambda x: x if x in encoder.classes_ else self.most_common_categories[i])

|

| 128 |

+

X_transformed.iloc[:, i] = encoder.transform(X_transformed.iloc[:, i])

|

| 129 |

+

else:

|

| 130 |

+

X_transformed.iloc[:, i] = X.iloc[:, i].fillna(X.iloc[:, i].mean())

|

| 131 |

+

|

| 132 |

+

# Scale numerical features

|

| 133 |

+

numerical_features = X_transformed.iloc[:, ~np.array(self.categoricals)]

|

| 134 |

+

if numerical_features.shape[-1]>0:

|

| 135 |

+

X_transformed.iloc[:, ~np.array(self.categoricals)] = self.scaler.transform(numerical_features)

|

| 136 |

+

|

| 137 |

+

return X_transformed.astype(float)

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

def inverse_transform_X(self, sample):

|

| 141 |

+

#inverse transform from pytorch tensor

|

| 142 |

+

sample=sample.detach().numpy()

|

| 143 |

+

sample_inverse_transformed = pd.DataFrame(sample.copy())

|

| 144 |

+

|

| 145 |

+

#Handle numerical features

|

| 146 |

+

numerical_features_indices = np.where(~np.array(self.categoricals))[0]

|

| 147 |

+

if len(numerical_features_indices)>0:

|

| 148 |

+

sample_inverse_transformed.iloc[:,numerical_features_indices] = self.scaler.inverse_transform(sample[:,numerical_features_indices])

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

for i, is_categorical in enumerate(self.categoricals):

|

| 152 |

+

if is_categorical:

|

| 153 |

+

encoder = self.label_encoders[i]

|

| 154 |

+

sample_inverse_transformed.iloc[:, i] = encoder.inverse_transform(sample[:, i].astype('int'))

|

| 155 |

+

sample_inverse_transformed.columns = self.attribute_names

|

| 156 |

+

return sample_inverse_transformed

|

| 157 |

+

|

| 158 |

+

|

| 159 |

+

def fit_transform_y(self, y):

|

| 160 |

+

if self.output_type == 0: # Regression

|

| 161 |

+

y_transformed = self.target_scaler.fit_transform(y.values.reshape(-1, 1)).flatten()

|

| 162 |

+

elif self.output_type == 1: # Binary classification

|

| 163 |

+

self.unique_targets = y.unique()

|

| 164 |

+

mapping = {category: idx for idx, category in enumerate(self.unique_targets)}

|

| 165 |

+

y_transformed = y.map(mapping).astype(int).values

|

| 166 |

+

elif self.output_type == 2: # Multiclass classification

|

| 167 |

+

self.target_encoder = LabelEncoder()

|

| 168 |

+

y_transformed = self.target_encoder.fit_transform(y)

|

| 169 |

+

else:

|

| 170 |

+

raise ValueError("Invalid output type")

|

| 171 |

+

return y_transformed

|

| 172 |

+

|

| 173 |

+

def transform_y(self, y):

|

| 174 |

+

if self.output_type == 0: # Regression

|

| 175 |

+

y_transformed = self.target_scaler.transform(y.values.reshape(-1, 1)).flatten()

|

| 176 |

+

elif self.output_type == 1: # Binary classification

|

| 177 |

+

mapping = {category: idx for idx, category in enumerate(self.unique_targets)}

|

| 178 |

+

y_transformed = y.map(mapping).astype(int).values

|

| 179 |

+

elif self.output_type == 2: # Multiclass classification

|

| 180 |

+

y_transformed = self.target_encoder.transform(y)

|

| 181 |

+

else:

|

| 182 |

+

raise ValueError("Invalid output type")

|

| 183 |

+

return y_transformed

|

| 184 |

+

|

| 185 |

+

def inverse_transform_y(self, nn_output):

|

| 186 |

+

if self.output_type == 0: # Regression

|

| 187 |

+

y_transformed=nn_output.squeeze().detach().numpy()

|

| 188 |

+

return self.target_scaler.inverse_transform(y_transformed.reshape(-1, 1)).flatten()

|

| 189 |

+

elif self.output_type == 1: # Binary classification

|

| 190 |

+

y_transformed=int(np.round(torch.sigmoid(nn_output).squeeze().detach().numpy()))

|

| 191 |

+

inverse_mapping = {idx: category for idx, category in enumerate(self.unique_targets)}

|

| 192 |

+

return inverse_mapping[y_transformed]

|

| 193 |

+

elif self.output_type == 2: # Multiclass classification

|

| 194 |

+

y_transformed=int(np.round(torch.argmax(nn_output).squeeze().detach().numpy()))

|

| 195 |

+

return self.target_encoder.inverse_transform([y_transformed])

|

| 196 |

+

else:

|

| 197 |

+

raise ValueError("Invalid output type")

|

| 198 |

+

|

DEMO.py

ADDED

|

@@ -0,0 +1,97 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

@author: Caglar Aytekin

|

| 3 |

+

contact: caglar@deepcause.ai

|

| 4 |

+

"""

|

| 5 |

+

# %% IMPORT

|

| 6 |

+

from LEURN import LEURN

|

| 7 |

+

import torch

|

| 8 |

+

from DATA import split_and_processing

|

| 9 |

+

from TRAINER import Trainer

|

| 10 |

+

import numpy as np

|

| 11 |

+

import openml

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

#DEMO FOR CREDIT SCORING DATASET: OPENML ID : 31

|

| 16 |

+

#MORE INFO: https://www.openml.org/search?type=data&sort=runs&id=31&status=active

|

| 17 |

+

#%% Set Neural Network Hyperparameters

|

| 18 |

+

depth=2

|

| 19 |

+

batch_size=1024

|

| 20 |

+

lr=5e-3

|

| 21 |

+

epochs=300

|

| 22 |

+

droprate=0.

|

| 23 |

+

output_type=1 #0: regression, 1: binary classification, 2: multi-class classification

|

| 24 |

+

|

| 25 |

+

#%% Check if CUDA is available and set the device accordingly

|

| 26 |

+

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

|

| 27 |

+

print("Using device:", device)

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

#%% Load the dataset

|

| 31 |

+

#Read dataset from openml

|

| 32 |

+

open_ml_dataset_id=1590

|

| 33 |

+

dataset = openml.datasets.get_dataset(open_ml_dataset_id)

|

| 34 |

+

X, y, categoricals, attribute_names = dataset.get_data(target=dataset.default_target_attribute)

|

| 35 |

+

#Alternatively load your own dataset from another source (excel,csv etc)

|

| 36 |

+

#Be mindful that X and y should be dataframes, categoricals is a boolean list indicating categorical features, attribute_names is a list of feature names

|

| 37 |

+

|

| 38 |

+

# %% Process data, save useful statistics

|

| 39 |

+

X_train,X_val,X_test,y_train,y_val,y_test,preprocessor=split_and_processing(X,y,categoricals,output_type,attribute_names)

|

| 40 |

+

|

| 41 |

+

#%% Initialize model, loss function, optimizer, and learning rate scheduler

|

| 42 |

+

model = LEURN(preprocessor, depth=depth,droprate=droprate).to(device)

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

#%%Train model

|

| 46 |

+

model_trainer=Trainer(model, X_train, X_val, y_train, y_val,lr=lr,batch_size=batch_size,epochs=epochs,problem_type=output_type)

|

| 47 |

+

model_trainer.train()

|

| 48 |

+

#Load best weights

|

| 49 |

+

model.load_state_dict(torch.load('best_model_weights.pth'))

|

| 50 |

+

|

| 51 |

+

#%%Evaluate performance

|

| 52 |

+

perf=model_trainer.evaluate(X_train, y_train)

|

| 53 |

+

perf=model_trainer.evaluate(X_test, y_test)

|

| 54 |

+

perf=model_trainer.evaluate(X_val, y_val)

|

| 55 |

+

|

| 56 |

+

#%%TESTS

|

| 57 |

+

model.eval()

|

| 58 |

+

|

| 59 |

+

#%%Check sample in original format:

|

| 60 |

+

print(preprocessor.inverse_transform_X(X_test[0:1]))

|

| 61 |

+

#%% Explain single example

|

| 62 |

+

Exp_df_test_sample,result,result_original_format=model.explain(X_test[0:1])

|

| 63 |

+

#%% Check results

|

| 64 |

+

print(result,result_original_format)

|

| 65 |

+

#%% Check explanation

|

| 66 |

+

print(Exp_df_test_sample)

|

| 67 |

+

#%% Influences

|

| 68 |

+

effects=model.influence_matrix()

|

| 69 |

+

new_list = [a for c, a in zip(categoricals, attribute_names) if c]+[a for c, a in zip(categoricals, attribute_names) if not(c)]

|

| 70 |

+

torch.argmax(effects,dim=1)

|

| 71 |

+

global_importances=model.global_importance()

|

| 72 |

+

#%% tests

|

| 73 |

+

#model output and sum of contributions should be the same

|

| 74 |

+

print(result,model.output,model(X_test[0:1]),Exp_df_test_sample['Contribution'].values.sum())

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

#%% GENERATION FROM SAME CATEGORY

|

| 78 |

+

generated_sample_nn_friendly, generated_sample_original_input_format,output=model.generate_from_same_category(X_test[0:1])

|

| 79 |

+

#%%Check sample in original format:

|

| 80 |

+

print(preprocessor.inverse_transform_X(X_test[0:1]))

|

| 81 |

+

print(generated_sample_original_input_format)

|

| 82 |

+

#%% Explain single example

|

| 83 |

+

Exp_df_generated_sample,result,result_original_format=model.explain(generated_sample_nn_friendly)

|

| 84 |

+

print(Exp_df_generated_sample)

|

| 85 |

+

print(Exp_df_test_sample.equals(Exp_df_generated_sample)) #this should be true

|

| 86 |

+

|

| 87 |

+

|

| 88 |

+

#%% GENERATE FROM SCRATCH

|

| 89 |

+

generated_sample_nn_friendly, generated_sample_original_input_format,output=model.generate()

|

| 90 |

+

Exp_df_generated_sample,result,result_original_format=model.explain(generated_sample_nn_friendly)

|

| 91 |

+

print(Exp_df_generated_sample)

|

| 92 |

+

print(result,result_original_format)

|

| 93 |

+

|

| 94 |

+

|

| 95 |

+

|

| 96 |

+

|

| 97 |

+

|

LEURN.py

ADDED

|

@@ -0,0 +1,695 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

@author: Caglar Aytekin

|

| 3 |

+

contact: caglar@deepcause.ai

|

| 4 |

+

"""

|

| 5 |

+

import torch

|

| 6 |

+

import torch.nn as nn

|

| 7 |

+

import random

|

| 8 |

+

import numpy as np

|

| 9 |

+

import pandas as pd

|

| 10 |

+

import copy

|

| 11 |

+

class CustomEncodingFunction(torch.autograd.Function):

|

| 12 |

+

@staticmethod

|

| 13 |

+

def forward(ctx, x, tau,alpha):

|

| 14 |

+

ctx.save_for_backward(x, tau)

|

| 15 |

+

# Perform the tanh operation on (x + tau)

|

| 16 |

+

y = torch.tanh(x + tau)

|

| 17 |

+

# The actual forward output : binarized output

|

| 18 |

+

forward_output = alpha * (2 * torch.round((y + 1) / 2) - 1) + (1-alpha)*y

|

| 19 |

+

return forward_output

|

| 20 |

+

|

| 21 |

+

@staticmethod

|

| 22 |

+

def backward(ctx, grad_output):

|

| 23 |

+

x, tau = ctx.saved_tensors

|

| 24 |

+

# Use the derivative of tanh for the backward pass: 1 - tanh^2(x + tau)

|

| 25 |

+

grad_input = grad_output * (1 - torch.tanh(x + tau) ** 2)

|

| 26 |

+

return grad_input, grad_input,None # Assuming tau also requires gradient

|

| 27 |

+

|

| 28 |

+

# Wrapping the custom function in a nn.Module for easier use

|

| 29 |

+

class EncodingLayer(nn.Module):

|

| 30 |

+

def __init__(self):

|

| 31 |

+

super(EncodingLayer, self).__init__()

|

| 32 |

+

def forward(self, x, tau,alpha):

|

| 33 |

+

return CustomEncodingFunction.apply(x, tau,alpha)

|

| 34 |

+

|

| 35 |

+

class LEURN(nn.Module):

|

| 36 |

+

def __init__(self, preprocessor,depth,droprate):

|

| 37 |

+

"""

|

| 38 |

+

Initializes the model.

|

| 39 |

+

|

| 40 |

+

Parameters:

|

| 41 |

+

- preprocessor: A class containing useful info about the dataset

|

| 42 |

+

- Including: attribute names, categorical features details, suggested embedding size for each category, output type, output dimension, transformation information

|

| 43 |

+

- depth: Depth of the network

|

| 44 |

+

- droprate: dropout rate

|

| 45 |

+

"""

|

| 46 |

+

super(LEURN, self).__init__()

|

| 47 |

+

|

| 48 |

+

#Find categorical indices and category numbers for each

|

| 49 |

+

self.alpha=1.0

|

| 50 |

+

self.preprocessor=preprocessor

|

| 51 |

+

self.attribute_names=preprocessor.attribute_names

|

| 52 |

+

self.label_encoders=preprocessor.encoders_for_nn

|

| 53 |

+

self.categorical_indices = [info[0] for info in preprocessor.category_details]

|

| 54 |

+

self.num_categories = [info[1] for info in preprocessor.category_details]

|

| 55 |

+

|

| 56 |

+

#If embedding_size is integer, cast it to all categories

|

| 57 |

+

if isinstance(preprocessor.suggested_embeddings, int):

|

| 58 |

+

embedding_sizes = [preprocessor.suggested_embeddings] * len(self.categorical_indices)

|

| 59 |

+

else:

|

| 60 |

+

assert len(preprocessor.suggested_embeddings) == len(self.categorical_indices), "Length of embedding_size must match number of categorical features"

|

| 61 |

+

embedding_sizes = preprocessor.suggested_embeddings

|

| 62 |

+

|

| 63 |

+

self.embedding_sizes=embedding_sizes

|

| 64 |

+

|

| 65 |

+

#Embedding layers for categorical features

|

| 66 |

+

self.embeddings = nn.ModuleList([

|

| 67 |

+

nn.Embedding(num_categories, embedding_dim)

|

| 68 |

+

for num_categories, embedding_dim in zip(self.num_categories, embedding_sizes)

|

| 69 |

+

])

|

| 70 |

+

|

| 71 |

+

for embedding_now in self.embeddings:

|

| 72 |

+

nn.init.uniform_(embedding_now.weight, -1.0, 1.0)

|

| 73 |

+

|

| 74 |

+

self.total_embedding_size = sum(embedding_sizes) #number of categorical features for NN

|

| 75 |

+

self.non_cat_input_dim = len(self.attribute_names) - len(self.categorical_indices) #Number of numerical features for NN

|

| 76 |

+

self.nn_input_dim = self.total_embedding_size + self.non_cat_input_dim #Number of features for NN

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

#LAYERS

|

| 80 |

+

|

| 81 |

+

self.tau_initial = nn.Parameter(torch.zeros(1,self.nn_input_dim)) # Initial tau as a learnable parameter

|

| 82 |

+

self.layers = nn.ModuleList()

|

| 83 |

+

self.depth = depth

|

| 84 |

+

self.output_type=preprocessor.output_type

|

| 85 |

+

|

| 86 |

+

for d_now in range(depth):

|

| 87 |

+

# Each iteration adds an encoding layer followed by a dropout and then a linear layer

|

| 88 |

+

self.layers.append(EncodingLayer())

|

| 89 |

+

self.layers.append(nn.Dropout1d(droprate))

|

| 90 |

+

linear_layer = nn.Linear((d_now + 1) * self.nn_input_dim, self.nn_input_dim)

|

| 91 |

+

self._init_weights(linear_layer,d_now+1) #special layer initialization

|

| 92 |

+

self.layers.append(linear_layer)

|

| 93 |

+

|

| 94 |

+

|

| 95 |

+

# Final stage: dropout and linear layer

|

| 96 |

+

self.final_dropout=nn.Dropout1d(droprate)

|

| 97 |

+

self.final_linear = nn.Linear(depth * self.nn_input_dim, self.preprocessor.output_dim)

|

| 98 |

+

self._init_weights(self.final_linear, depth)

|

| 99 |

+

|

| 100 |

+

def set_alpha(self, alpha):

|

| 101 |

+

"""Method to update the dynamic parameter."""

|

| 102 |

+

self.alpha = alpha

|

| 103 |

+

|

| 104 |

+

def _init_weights(self, layer,depth_now):

|

| 105 |

+

# Custom initialization

|

| 106 |

+

# Considering the binary (-1,1) nature of the input,

|

| 107 |

+

# when we initialize layer in (-1/dim,1/dim) range, output is bounded at (-1,1)

|

| 108 |

+

# Knowing our input is roughly at (-1,1) range, this serves as good initialization for tau

|

| 109 |

+

|

| 110 |

+

if not(self.embedding_sizes==[]):

|

| 111 |

+

init_tensor = torch.tensor([1/size for size in self.embedding_sizes for _ in range(size)])

|

| 112 |

+

if init_tensor.shape[0]<self.nn_input_dim: #Means we have numericals too

|

| 113 |

+

init_tensor=torch.cat((init_tensor, torch.ones(self.non_cat_input_dim)), dim=0)

|

| 114 |

+

else:

|

| 115 |

+

init_tensor = torch.ones(self.non_cat_input_dim)

|

| 116 |

+

|

| 117 |

+

init_tensor=init_tensor/((depth_now+1)*torch.tensor(len(self.attribute_names)))

|

| 118 |

+

init_tensor=init_tensor.unsqueeze(0).repeat_interleave(repeats=layer.weight.shape[0],dim=0).repeat_interleave(repeats=depth_now,dim=1)

|

| 119 |

+

layer.weight.data.uniform_(-1, 1)

|

| 120 |

+

layer.weight=torch.nn.Parameter(layer.weight*init_tensor)

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

def forward(self, x):

|

| 124 |

+

# Defines forward function for provided input: Normalizes numericals, embeds categoricals, and gives to neural network.

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

# Separate categorical and numerical features for easier handling

|

| 128 |

+

cat_features = [x[:, i].long() for i in self.categorical_indices]

|

| 129 |

+

non_cat_features = [x[:, i] for i in range(x.size(1)) if i not in self.categorical_indices]

|

| 130 |

+

non_cat_features = torch.stack(non_cat_features, dim=1) if non_cat_features else x.new_empty(x.size(0), 0)

|

| 131 |

+

|

| 132 |

+

# Embed categoricals

|

| 133 |

+

embedded_features = [embedding(cat_feature) for embedding, cat_feature in zip(self.embeddings, cat_features)]

|

| 134 |

+

# Combine categoricals and numericals

|

| 135 |

+

try:

|

| 136 |

+

embedded_features = torch.cat(embedded_features, dim=1)

|

| 137 |

+

nninput = torch.cat([embedded_features, non_cat_features], dim=1)

|

| 138 |

+

except:

|

| 139 |

+

nninput=non_cat_features

|

| 140 |

+

|

| 141 |

+

self.nninput=nninput

|

| 142 |

+

|

| 143 |

+

# Forward pass neural network

|

| 144 |

+

output=self.forward_from_embeddings(self.nninput)

|

| 145 |

+

self.output=output

|

| 146 |

+

return output

|

| 147 |

+

|

| 148 |

+

def forward_from_embeddings(self,x):

|

| 149 |

+

# Forward function for normalized numericals and embedded categoricals

|

| 150 |

+

tau=self.tau_initial

|

| 151 |

+

tau=torch.repeat_interleave(tau,x.shape[0],0) #tau is 1xF, cast it for batch

|

| 152 |

+

# For each depth

|

| 153 |

+

for i in range(0, self.depth * 3, 3):

|

| 154 |

+

# encode, drop and find next tau

|

| 155 |

+

encoding_layer = self.layers[i]

|

| 156 |

+

dropout_layer = self.layers[i + 1]

|

| 157 |

+

linear_layer = self.layers[i + 2]

|

| 158 |

+

#encode and drop

|

| 159 |

+

encoded_x =dropout_layer( encoding_layer(x, tau,self.alpha))

|

| 160 |

+

#save encodings and thresholds

|

| 161 |

+

#notice that threshold is -tau, not tau since we binarize x+tau

|

| 162 |

+

if i==0:

|

| 163 |

+

encodings=encoded_x

|

| 164 |

+

taus=-tau

|

| 165 |

+

else:

|

| 166 |

+

encodings=torch.cat((encodings,encoded_x),dim=-1)

|

| 167 |

+

taus=torch.cat((taus,-tau),dim=-1)

|

| 168 |

+

#find next thresholds

|

| 169 |

+

tau = linear_layer(encodings) #not used, redundant for last layer

|

| 170 |

+

|

| 171 |

+

self.encodings=encodings

|

| 172 |

+

self.taus=taus

|

| 173 |

+

#Final layer: drop and linear

|

| 174 |

+

output=self.final_linear(self.final_dropout(encodings))

|

| 175 |

+

|

| 176 |

+

return output

|

| 177 |

+

|

| 178 |

+

|

| 179 |

+

def find_boundaries(self, x):

|

| 180 |

+

"""

|

| 181 |

+

Given input, find boundaries for numerical features and valid categories for categorical features

|

| 182 |

+

Can accept unnormalized and not embedded input - set embedding False

|

| 183 |

+

"""

|

| 184 |

+

# Ensure x is the correct shape [1, input_dim]

|

| 185 |

+

if x.ndim == 1:

|

| 186 |

+

x = x.unsqueeze(0) # Add batch dimension if not present

|

| 187 |

+

|

| 188 |

+

# Perform a forward pass to update self.encodings and self.taus

|

| 189 |

+

# to update self.taus

|

| 190 |

+

|

| 191 |

+

self(x)

|

| 192 |

+

|

| 193 |

+

# self.taus has the shape [1, depth * input_dim]

|

| 194 |

+

# reshape to [depth, input_dim] for easier boundary finding

|

| 195 |

+

taus_reshaped = self.taus.view(self.depth, self.nn_input_dim)

|

| 196 |

+

|

| 197 |

+

# embedded and normalized input

|

| 198 |

+

embedded_x=self.nninput

|

| 199 |

+

|

| 200 |

+

# Initialize boundaries - numericals are in (-1,1) range and categoricals are from embeddings.

|

| 201 |

+

# So -100,100 is safe min and max. -inf,+inf is not chosen since problematic for later sampling

|

| 202 |

+

upper_boundaries = torch.full((embedded_x.size(1),), 100.0)

|

| 203 |

+

lower_boundaries = torch.full((embedded_x.size(1),), -100.0)

|

| 204 |

+

|

| 205 |

+

# Compare each threshold in self.taus with the corresponding input value

|

| 206 |

+

for feature_index in range(self.nn_input_dim):

|

| 207 |

+

for depth_index in range(self.depth):

|

| 208 |

+

threshold = taus_reshaped[depth_index, feature_index]

|

| 209 |

+

input_value = embedded_x[0, feature_index]

|

| 210 |

+

|

| 211 |

+

# If the threshold is greater than the input value and less than the current upper boundary, update the upper boundary

|

| 212 |

+

if threshold > input_value and threshold < upper_boundaries[feature_index]:

|

| 213 |

+

upper_boundaries[feature_index] = threshold

|

| 214 |

+

|

| 215 |

+

# If the threshold is less than the input value and greater than the current lower boundary, update the lower boundary

|

| 216 |

+

if threshold < input_value and threshold > lower_boundaries[feature_index]:

|

| 217 |

+

lower_boundaries[feature_index] = threshold

|

| 218 |

+

|

| 219 |

+

# Convert boundaries to a list of tuples [(lower, upper), ...] for each feature

|

| 220 |

+

boundaries = list(zip(lower_boundaries.tolist(), upper_boundaries.tolist()))

|

| 221 |

+

|

| 222 |

+

|

| 223 |

+

self.upper_boundaries=upper_boundaries

|

| 224 |

+

self.lower_boundaries=lower_boundaries

|

| 225 |

+

|

| 226 |

+

|

| 227 |

+

return boundaries

|

| 228 |

+

|

| 229 |

+

def categories_within_boundaries(self):

|

| 230 |

+

"""

|

| 231 |

+

For each categorical feature, checks if embedding weights fall within the specified upper and lower boundaries.

|

| 232 |

+

Returns a dictionary with categorical feature indices as keys and lists of category indices that fall within the boundaries.

|

| 233 |

+

"""

|

| 234 |

+

categories_within_bounds = {}

|

| 235 |

+

emb_st=0

|

| 236 |

+

for cat_index, emb_layer in zip(range(len(self.categorical_indices)), self.embeddings):

|

| 237 |

+

# Extract upper and lower boundaries for this categorical feature

|

| 238 |

+

lower_bound=self.lower_boundaries[emb_st:emb_st+self.embedding_sizes[cat_index]]

|

| 239 |

+

upper_bound=self.upper_boundaries[emb_st:emb_st+self.embedding_sizes[cat_index]]

|

| 240 |

+

emb_st=emb_st+self.embedding_sizes[cat_index]

|

| 241 |

+

# Initialize list to hold categories that fall within boundaries

|

| 242 |

+

categories_within = []

|

| 243 |

+

|

| 244 |

+

# Iterate over each embedding vector in the layer

|

| 245 |

+

for i, weight in enumerate(emb_layer.weight):

|

| 246 |

+

# Check if the embedding weight falls within the boundaries

|

| 247 |

+

if torch.all(weight >= lower_bound) and torch.all(weight <= upper_bound):

|

| 248 |

+

categories_within.append(i) # Using index i as category identifier

|

| 249 |

+

|

| 250 |

+

# Store the categories that fall within the boundaries for this feature

|

| 251 |

+

categories_within_bounds[cat_index] = categories_within

|

| 252 |

+

|

| 253 |

+

return categories_within_bounds

|

| 254 |

+

|

| 255 |

+

def global_importance(self):

|

| 256 |

+

final_layer_weight=torch.clone(self.final_linear.weight).detach().numpy()

|

| 257 |

+

importances=np.sum(np.abs(final_layer_weight),0)

|

| 258 |

+

importances=importances.reshape(importances.shape[0]//self.nn_input_dim,self.nn_input_dim)

|

| 259 |

+

importances=np.sum(importances,0)

|

| 260 |

+

importances_features=[]

|

| 261 |

+

st=0

|

| 262 |

+

for i in range(len(self.attribute_names)):

|

| 263 |

+

try:

|

| 264 |

+

importances_features.append(np.sum(importances[st:st+self.embedding_sizes[i]]))

|

| 265 |

+

st=st+self.embedding_sizes[i]

|

| 266 |

+

except:

|

| 267 |

+

|

| 268 |

+

st=st+1

|

| 269 |

+

return np.argsort(importances_features)[::-1],np.sort(importances_features)[::-1]

|

| 270 |

+

|

| 271 |

+

def influence_matrix(self):

|

| 272 |

+

"""

|

| 273 |

+

Finds ADG from how each feature effects other's threshold via weight matrices

|

| 274 |

+

"""

|

| 275 |

+

|

| 276 |

+

def create_block_sum_matrix(sizes, matrix):

|

| 277 |

+

L = len(sizes)

|

| 278 |

+

# Initialize the output matrix with zeros, using PyTorch

|

| 279 |

+

block_sum_matrix = torch.zeros((L, L))

|

| 280 |

+

|

| 281 |

+

# Define the starting row and column indices for slicing

|

| 282 |

+

start_row = 0

|

| 283 |

+

for i, row_size in enumerate(sizes):

|

| 284 |

+

start_col = 0

|

| 285 |

+

for j, col_size in enumerate(sizes):

|

| 286 |

+

# Calculate the sum of the current block using PyTorch

|

| 287 |

+

block_sum = torch.sum(matrix[start_row:start_row+row_size, start_col:start_col+col_size])

|

| 288 |

+

block_sum_matrix[i, j] = block_sum

|

| 289 |

+

# Update the starting column index for the next block in the row

|

| 290 |

+

start_col += col_size

|

| 291 |

+

# Update the starting row index for the next block in the column

|

| 292 |

+

start_row += row_size

|

| 293 |

+

|

| 294 |

+

return block_sum_matrix

|

| 295 |

+

|

| 296 |

+

def add_ones_until_target(initial_list, target_sum):

|

| 297 |

+

# Continue adding 1s until the sum of the list equals the target sum

|

| 298 |

+

while sum(initial_list) < target_sum:

|

| 299 |

+

initial_list.append(1)

|

| 300 |

+

return initial_list

|

| 301 |

+

|

| 302 |

+

for i in range(0, self.depth * 3, 3):

|

| 303 |

+

# encode, drop and find next tau

|

| 304 |

+

weight_now=self.layers[i + 2].weight

|

| 305 |

+

weight_now_reshaped=weight_now.reshape((weight_now.shape[0], weight_now.shape[1]//self.nn_input_dim,self.nn_input_dim)) #shape: output x depth x input

|

| 306 |

+

if i==0:

|

| 307 |

+

# effects=np.sum(np.abs(weight_now_reshaped.numpy()),axis=1)/self.depth #shape: output x input

|

| 308 |

+

effects=torch.sum(torch.abs(weight_now_reshaped), dim=1) / self.depth

|

| 309 |

+

else:

|

| 310 |

+

effects=effects+torch.sum(torch.abs(weight_now_reshaped), dim=1) / self.depth

|

| 311 |

+

|

| 312 |

+

effects=effects.t() #shape: input x output

|

| 313 |

+

|

| 314 |

+

modified_list = add_ones_until_target(copy.deepcopy(self.embedding_sizes), effects.shape[0])

|

| 315 |

+

|

| 316 |

+

|

| 317 |

+

effects=create_block_sum_matrix(modified_list,effects)

|

| 318 |

+

|

| 319 |

+

return effects

|

| 320 |

+

|

| 321 |

+

|

| 322 |

+

def explain_without_causal_effects(self,x):

|

| 323 |

+

"""

|

| 324 |

+

Explains decisions of the neural network for input sample.

|

| 325 |

+

For numericals, extracts upper and lower boundaries on the sample

|

| 326 |

+

For categoricals displays possible categories

|

| 327 |

+

Also calculates contributions of each feature to final result

|

| 328 |

+

"""

|

| 329 |

+

self.find_boundaries(x) #find upper, lower boundaries for all nn inputs

|

| 330 |

+

|

| 331 |

+

#find valid categories for categorical features

|

| 332 |

+

valid_categories=self.categories_within_boundaries()

|

| 333 |

+

|

| 334 |

+

#numerical boundaries

|

| 335 |

+

upper_numerical=self.upper_boundaries[sum(self.embedding_sizes):].detach().numpy()

|

| 336 |

+

lower_numerical=self.lower_boundaries[sum(self.embedding_sizes):].detach().numpy()

|

| 337 |

+

|

| 338 |

+

#Find contribution from each feature in final linear layer, distribute bias evenly

|

| 339 |

+

contributions=self.encodings * self.final_linear.weight + self.final_linear.bias.unsqueeze(dim=-1)/self.final_linear.weight.shape[1]

|

| 340 |

+

contributions=contributions.detach().resize_((contributions.shape[0], contributions.shape[1]//self.nn_input_dim,self.nn_input_dim))

|

| 341 |

+

contributions=torch.sum(contributions,dim=1)

|

| 342 |

+

|

| 343 |

+

# Initialize an empty list to store the summed contributions

|

| 344 |

+

summed_contributions = []

|

| 345 |

+

|

| 346 |

+

# Initialize start index for slicing

|

| 347 |

+

start_idx = 0

|

| 348 |

+

|

| 349 |

+

#Sum contribution of each categorical within respective embedding

|

| 350 |

+

for size in self.embedding_sizes:

|

| 351 |

+

# Calculate end index for the current chunk

|

| 352 |

+

end_idx = start_idx + size

|

| 353 |

+

|

| 354 |

+

# Sum the contributions in the current chunk

|

| 355 |

+

chunk_sum = contributions[:, start_idx:end_idx].sum(dim=1, keepdim=True)

|

| 356 |

+

|

| 357 |

+

# Append the summed chunk to the list

|

| 358 |

+

summed_contributions.append(chunk_sum)

|

| 359 |

+

|

| 360 |

+

# Update the start index for the next chunk

|

| 361 |

+

start_idx = end_idx

|

| 362 |

+

|

| 363 |

+

# If there are remaining elements not covered by embedding_sizes, add them as is (numerical features)

|

| 364 |

+

if start_idx < contributions.shape[1]:

|

| 365 |

+

remaining = contributions[:, start_idx:]

|

| 366 |

+

summed_contributions.append(remaining)

|

| 367 |

+

|

| 368 |

+

# Concatenate the summed contributions back into a tensor

|

| 369 |

+

summed_contributions = torch.cat(summed_contributions, dim=1)

|

| 370 |

+

# This is to handle multi-class explanations, for binary this is 0 automatically

|

| 371 |

+

# Note: multi-output regression is not supported yet. This will just return largest regressed value's explanations

|

| 372 |

+

highest_index=torch.argmax(summed_contributions.sum(dim=1))

|

| 373 |

+

# This is contribution from each feature

|

| 374 |

+

result=summed_contributions[highest_index]

|

| 375 |

+

self.result=result

|

| 376 |

+

|

| 377 |

+

#Explanation and Contribution formats are in ordered format (categoricals first, numericals later)

|

| 378 |

+

#Bring them to original format in user input

|

| 379 |

+

#Combine categoricals and numericals explanations and contributions

|

| 380 |

+

Explanation = [None] * (len(self.categorical_indices) + len(upper_numerical))

|

| 381 |

+

Contribution = np.zeros((len(self.categorical_indices) + len(upper_numerical),))

|

| 382 |

+

|

| 383 |

+

# Fill in the categorical samples

|

| 384 |

+

for j, cat_index in enumerate(self.categorical_indices):

|

| 385 |

+

Explanation[cat_index] = valid_categories[j]

|

| 386 |

+

Contribution[cat_index] = result[j].numpy()

|

| 387 |

+

|

| 388 |

+

|

| 389 |

+

#INVERSE TRANSFORM PART 1-------------------------------------------------------------------------------------------

|

| 390 |

+

#Inverse transform upper and lower_numericals

|

| 391 |

+

len_num=len(upper_numerical)

|

| 392 |

+

if len_num>0:

|

| 393 |

+

upper_numerical=self.preprocessor.scaler.inverse_transform(upper_numerical.reshape(1,-1))

|

| 394 |

+

lower_numerical=self.preprocessor.scaler.inverse_transform(lower_numerical.reshape(1,-1))

|

| 395 |

+

if len_num>1:

|

| 396 |

+

upper_numerical=np.squeeze(upper_numerical)

|

| 397 |

+

lower_numerical=np.squeeze(lower_numerical)

|

| 398 |

+

upper_iter = iter(upper_numerical)

|

| 399 |

+

lower_iter = iter(lower_numerical)

|

| 400 |

+

|

| 401 |

+

|

| 402 |

+

cnt=0

|

| 403 |

+

for i in range(len(Explanation)):

|

| 404 |

+

if Explanation[i] is None:

|

| 405 |

+

#Note the denormalization here

|

| 406 |

+

Explanation[i] = next(lower_iter),next(upper_iter)

|

| 407 |

+

if len(self.categorical_indices)>0:

|

| 408 |

+

Contribution[i] = result[j+cnt+1].numpy()

|

| 409 |

+

else:

|

| 410 |

+

Contribution[i] = result[cnt].numpy()