Abstract

Research by leading AI organizations focuses more on pre-deployment stages like model alignment and testing over deployment issues such as bias, with significant gaps existing in high-risk areas like healthcare and finance.

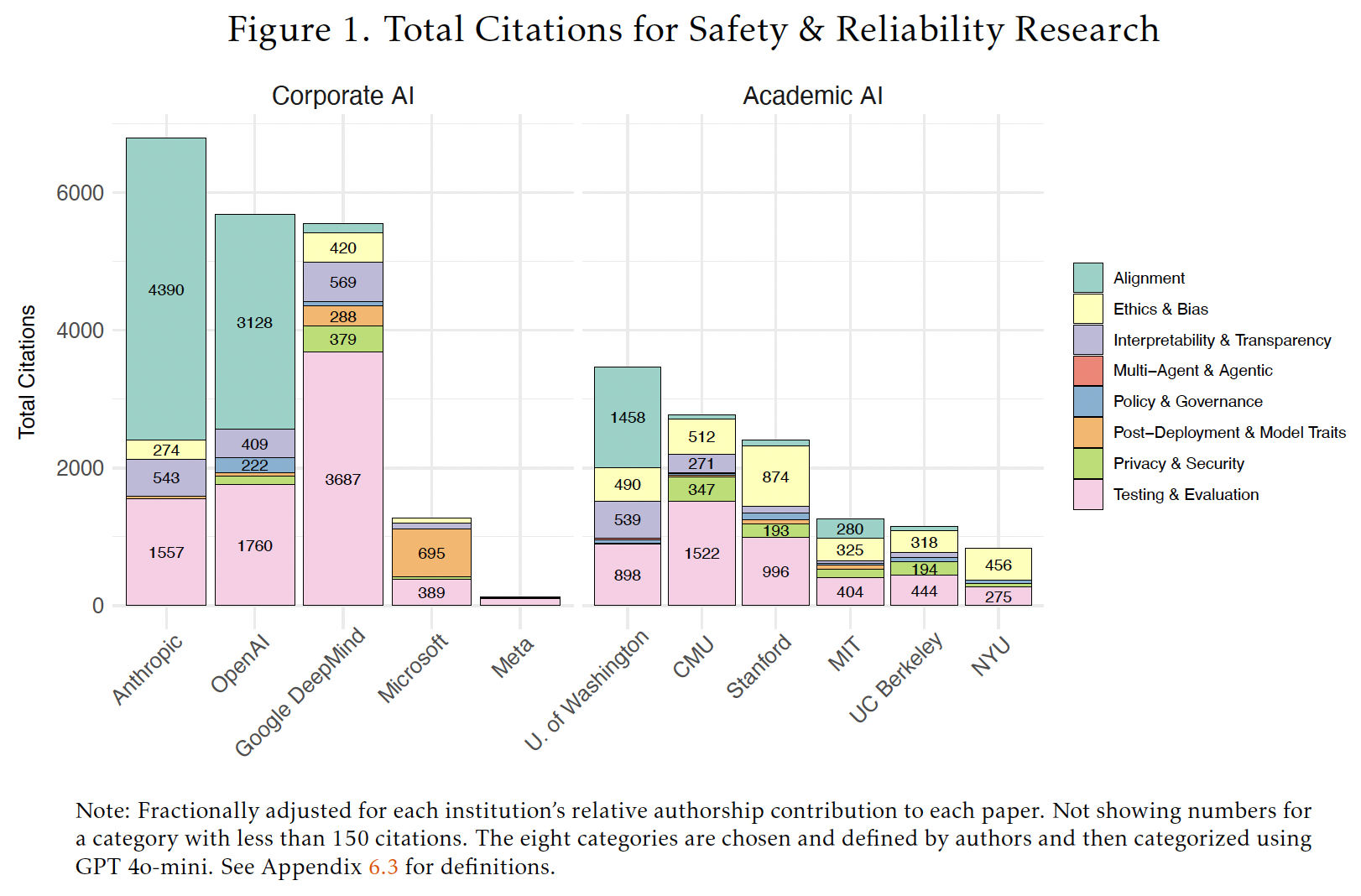

Drawing on 1,178 safety and reliability papers from 9,439 generative AI papers (January 2020 - March 2025), we compare research outputs of leading AI companies (Anthropic, Google DeepMind, Meta, Microsoft, and OpenAI) and AI universities (CMU, MIT, NYU, Stanford, UC Berkeley, and University of Washington). We find that corporate AI research increasingly concentrates on pre-deployment areas -- model alignment and testing & evaluation -- while attention to deployment-stage issues such as model bias has waned. Significant research gaps exist in high-risk deployment domains, including healthcare, finance, misinformation, persuasive and addictive features, hallucinations, and copyright. Without improved observability into deployed AI, growing corporate concentration could deepen knowledge deficits. We recommend expanding external researcher access to deployment data and systematic observability of in-market AI behaviors.

Community

Real-World Gaps in AI Governance Research: AI safety and reliability in everyday deployments

by Ilan Strauss, Isobel Moure, Tim O’Reilly and Sruly Rosenblat

AI Disclosures Project (Social Science Research Council)

Drawing on 1,178 safety and reliability papers from 9,439 generative AI papers (January 2020 – March 2025), we compare research outputs of leading AI companies (Anthropic, Google DeepMind, Meta, Microsoft, and OpenAI) and AI universities (CMU, MIT, NYU, Stanford, UC Berkeley, and University of Washington). We find that corporate AI research increasingly concentrates on pre-deployment areas — model alignment and testing & evaluation — while attention to deployment-stage issues such as

model bias has waned. Significant research gaps exist in high-risk deployment domains, including healthcare, finance, misinformation, persuasive and addictive features, hallucinations, and copyright. Without improved observability into deployed AI, growing corporate concentration could deepen knowledge deficits. We recommend expanding external researcher access to deployment data and systematic observability of in-market AI behaviors.

Models citing this paper 0

No model linking this paper

Datasets citing this paper 1

Spaces citing this paper 0

No Space linking this paper